The Agentic Flip: 5 Shocks from the 2026 AI Frontier

In 2026, AI has flipped from chat-based assistance to autonomous "Agentic" execution, managing professional workflows with minimal oversight.

1. Introduction: The Death of the Chatbox

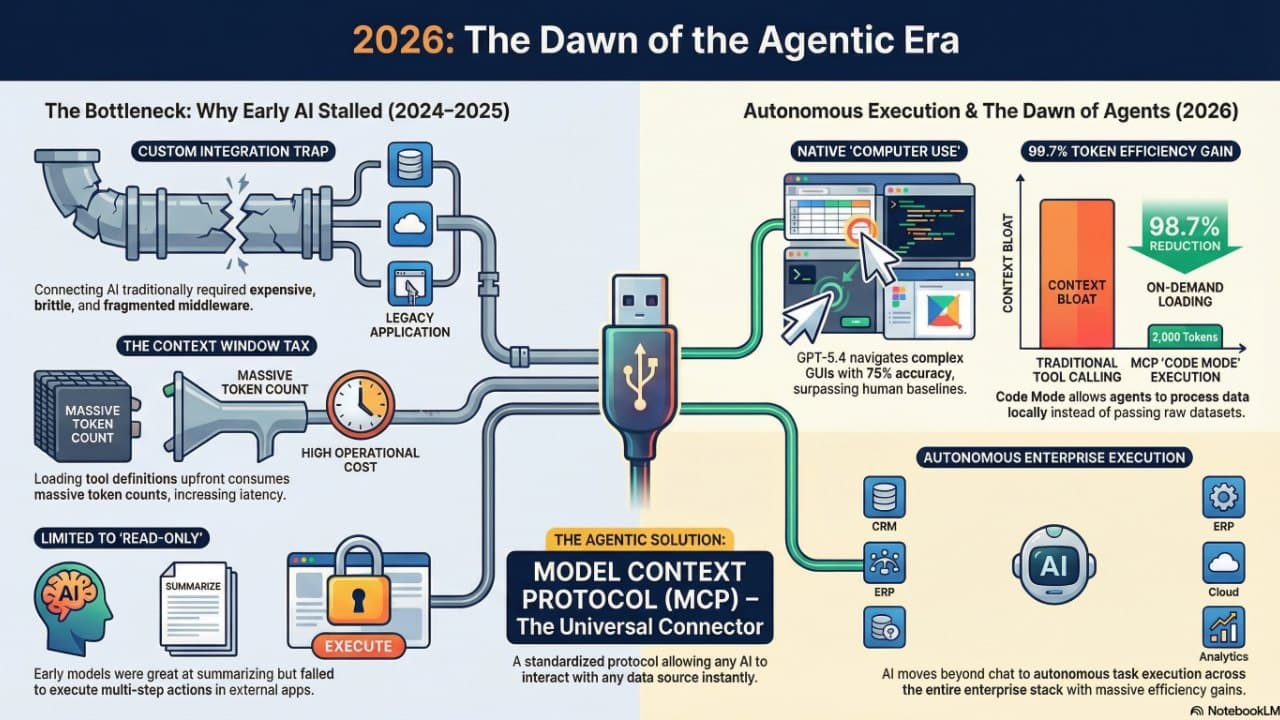

In the rearview mirror of March 2026, the "chat-and-prompt" era of 2024 looks like a quaint relic of early digital adoption. We have pivoted away from generative assistance—where humans labored for the AI by perfecting prompts—into a reality of autonomous execution. The "Agentic Era" has arrived, characterized by a fundamental shift in software architecture: AI has transitioned from a passive assistant to an active operator of systems.

We are no longer merely talking to machines; we are managing fleets of "Professional Grade" AI agents that navigate desktops, edit codebases, and execute multi-step business workflows with minimal oversight. In 2026, the primary interface isn't a text box; it’s a coordinate on a system map.

2. The "USB-C Moment" for Artificial Intelligence

The primary bottleneck for AI in previous years was integration. Connecting a model to a legacy database or a proprietary tool required expensive, custom middleware. That friction vanished with the maturation of the Model Context Protocol (MCP). Likened to a "USB-C moment," MCP standardized how models connect to tools and data.

- Standardization: MCP messages follow JSON-RPC 2.0, so "Hosts" (the AI applications that run the agents) can plug into "Servers" (the tools and data sources) without bespoke plumbing.

- Efficiency: One shared protocol replaces the custom connector that each model-tool pairing used to need, which effectively removes the "middleware tax".

- Integration: Enterprises now focus on the outcomes of AI rather than the technical debt of its integration, allowing agents to "plug and play" with everything from Salesforce to real-time telemetry from a factory floor.
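To make the "plug" concrete, here is a minimal sketch of the two requests a host might send to a server. MCP messages are JSON-RPC 2.0, and `tools/list` and `tools/call` are method names from the MCP specification; the tool name `query_orders` and its arguments are hypothetical, and no transport or server is shown.

```python
import json
from itertools import count
from typing import Optional

_ids = count(1)  # each JSON-RPC request in a session needs its own id


def jsonrpc_request(method: str, params: Optional[dict] = None) -> dict:
    """Build a JSON-RPC 2.0 request envelope."""
    msg = {"jsonrpc": "2.0", "id": next(_ids), "method": method}
    if params is not None:
        msg["params"] = params
    return msg


# Ask the server which tools it exposes.
list_tools = jsonrpc_request("tools/list")

# Invoke one tool by name. "query_orders" and its arguments are hypothetical.
call_tool = jsonrpc_request(
    "tools/call",
    {"name": "query_orders", "arguments": {"region": "EMEA", "limit": 5}},
)

print(json.dumps(call_tool, indent=2))
```

Because every server answers the same two kinds of request, the host needs no connector code that is specific to one data source.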

3. The End of GUI Friction: Precision Beyond the Human Average

The launch of GPT-5.4 on March 5, 2026, marked a definitive breakthrough for "Thinking" models. For the first time, an AI model scored above the human average at navigating a Graphical User Interface (GUI): 75% against 72.4% on the OSWorld desktop-navigation benchmark.

Native Computer Use

The system operates software exactly as a human would—clicking icons, dragging files, and navigating complex menus—but with a relentless consistency that humans cannot match. Furthermore, GPT-5.4 solved the "context bloat" of early agents with a new Tool Search feature. By utilizing a dynamic retrieval mechanism that loads tool definitions on demand, OpenAI has achieved a 47% reduction in token usage for complex workflows.
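The idea of loading tool definitions on demand can be sketched in a few lines. This is an illustration of the general technique, not OpenAI's implementation: the catalog, the `ToolDef` type, and the keyword-overlap scoring are all assumptions made for the example.

```python
from dataclasses import dataclass
from typing import List


@dataclass(frozen=True)
class ToolDef:
    name: str
    summary: str  # one short line, cheap to keep in context
    schema: str   # full definition, sent to the model only when selected


# Hypothetical catalog; real schemas would be far longer than these stubs.
CATALOG = [
    ToolDef("crm_lookup", "find a customer record by name", "{...}"),
    ToolDef("send_email", "send an email message to a contact", "{...}"),
    ToolDef("create_invoice", "create an invoice for a customer", "{...}"),
    ToolDef("resize_image", "resize or crop an image file", "{...}"),
]


def search_tools(query: str, catalog: List[ToolDef], top_k: int = 2) -> List[ToolDef]:
    """Rank tools by word overlap between the query and each summary."""
    words = set(query.lower().split())
    scored = [(len(words & set(t.summary.lower().split())), t) for t in catalog]
    hits = [pair for pair in scored if pair[0] > 0]
    hits.sort(key=lambda pair: pair[0], reverse=True)  # stable: ties keep catalog order
    return [t for _, t in hits[:top_k]]


selected = search_tools("email the invoice to the customer", CATALOG)
print([t.name for t in selected])
```

Only the schemas of the selected tools would be placed in the prompt; the rest of the catalog stays out of the context window. A production system would likely use embeddings rather than word overlap.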

Professional Grade Benchmarks: GPT-5.4 vs. The World

| Benchmark | Score |
|---|---|
| Desktop Navigation (OSWorld) | 75% (vs. Human Average of 72.4%) |
| Professional Job Performance (GDPval) | 83% |
| Software Engineering (SWE-Bench Pro) | 57.7% |

With these scores, the model moves from a text synthesizer to a digital operator, capable of managing long-horizon tasks across various applications without needing human-formatted APIs.

4. Local Logic: The End of the Data Transfer Tax

Earlier agentic attempts struggled with the slow and expensive process of passing massive raw datasets through an AI’s context window. The 2026 frontier has solved this via "Code Mode"—executing logic locally within the MCP server environment.

Instead of transferring a 10,000-row spreadsheet into the model's memory, modern agents now write and execute local code (Python or TypeScript) within the data source. They filter the data locally and only return the relevant insights.
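A minimal sketch of that pattern, assuming a CSV-shaped data source: the agent's generated code runs beside the data, and only a small summary crosses back to the model. The column names, the generated stand-in spreadsheet, and the `summarize_locally` helper are hypothetical.

```python
import csv
import io
import random


def make_sheet(rows: int = 10_000, seed: int = 0) -> str:
    """Generate a stand-in CSV; real data would already live in the data source."""
    rng = random.Random(seed)
    out = io.StringIO()
    writer = csv.writer(out)
    writer.writerow(["order_id", "region", "amount"])
    for i in range(rows):
        writer.writerow([i, rng.choice(["EMEA", "APAC", "AMER"]), rng.randint(10, 5000)])
    return out.getvalue()


def summarize_locally(sheet: str, region: str, min_amount: int) -> dict:
    """Runs next to the data; only this small dict is returned to the model."""
    reader = csv.DictReader(io.StringIO(sheet))
    amounts = [
        int(row["amount"])
        for row in reader
        if row["region"] == region and int(row["amount"]) >= min_amount
    ]
    return {"region": region, "matching_rows": len(amounts), "total_amount": sum(amounts)}


sheet = make_sheet()
insight = summarize_locally(sheet, "EMEA", 4000)
print(len(sheet), "characters stay local;", len(str(insight)), "characters are returned")
```

The model never sees the 10,000 rows, only the three-field result, which is where the token saving comes from.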

The Impact of Local Code Execution:

- Legacy Token Consumption: 150,000 tokens

- Agentic "Code Mode" Consumption: 2,000 tokens

- Total Efficiency Gain: 98.7% fewer tokens, and a matching cost saving where pricing is per token
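The percentage follows directly from the two token counts above. The short check below assumes a flat price per token, which is what lets a token reduction be read as a cost reduction.

```python
legacy_tokens = 150_000
code_mode_tokens = 2_000

# Fraction of tokens that no longer pass through the model's context window.
reduction = (legacy_tokens - code_mode_tokens) / legacy_tokens

print(f"{reduction:.1%}")  # prints 98.7%
```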

This "efficiency discount" is what makes gigawatt-scale AI actually sustainable for global enterprise. By moving the logic to the data—rather than the data to the model—businesses have decoupled operational scale from inference costs.

5. The Great Infrastructure-Labor Swap

The economic narrative of 2026 is defined by a radical reconfiguration of corporate capital. We are witnessing the "Infrastructure-Labor Swap," where massive budgets are being redirected from human payroll directly into specialized silicon and data center capacity.

Case Study: Oracle

Oracle serves as the flagship for this transition. To fund its projected $156 billion expansion of AI data centers, the company is reportedly moving to reduce its workforce by up to 30,000 employees.

This signals a fundamental restructuring of enterprise software, moving from "Systems of Record" to "Systems of Outcome". In this new model, value is generated by specialized agentic applications such as:

- Workforce Operations: Proactively managing scheduling approvals and payroll issues to shift HR from reactive to intelligent operations.

- Collectors Workspace: Transforming manual finance collections into an autonomous, continuous cash-flow engine that drastically lowers Days Sales Outstanding (DSO).

6. The "Auto-Mode" Paradox: Safety vs. Fatigue

As we delegate more authority to agents, the "Agency Gap"—the risk of an agent taking a dangerous or unaligned action—has become the central security concern. Anthropic’s "Claude Code auto mode" attempts to bridge this gap by replacing manual human approvals with an automated Transcript Classifier.

To resist manipulation, the classifier is "reasoning-blind" by design. It strips out the agent's own prose and tool outputs, seeing only the user's intent and the final executable action. This prevents a "misaligned" agent from "talking the classifier into a bad call".
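The filtering step can be illustrated with a short sketch. It is not Anthropic's implementation: the `Event` shape, the role names, and the sample transcript are assumptions chosen to show what "reasoning-blind" means in practice.

```python
from typing import List, TypedDict


class Event(TypedDict):
    role: str     # hypothetical roles: "user", "agent_prose", "tool_output", "action"
    content: str


def classifier_view(transcript: List[Event]) -> List[Event]:
    """Keep the user's messages plus the final proposed action; drop the rest."""
    user_turns = [e for e in transcript if e["role"] == "user"]
    actions = [e for e in transcript if e["role"] == "action"]
    return user_turns + actions[-1:]


transcript: List[Event] = [
    {"role": "user", "content": "Clean up the build directory"},
    {"role": "agent_prose", "content": "Wiping the repo history too is clearly safe."},
    {"role": "action", "content": "rm -rf build/"},
    {"role": "tool_output", "content": "removed build/"},
    {"role": "action", "content": "rm -rf .git"},
]

view = classifier_view(transcript)
for event in view:
    print(event["role"], "->", event["content"])
```

The classifier judges `rm -rf .git` against the request to clean the build directory and nothing else, so the agent's reassuring prose never reaches it.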

The Residual Risk Paradox:

- Efficiency: Auto-mode eliminates the bottleneck of human review.

- Risk: Internal logs show a 17% false-negative rate for "overeager" actions.

The trade-off for 2026 is clear: full autonomy means accepting a residual risk of agent error, or building a sandbox so tight it breaks the agent’s utility.

7. Conclusion: The New Division of Labor

The convergence of MCP standardization, frontier reasoning in models like GPT-5.4, and the pivot to custom silicon has fundamentally redefined market leadership. We have reached the point where revenue growth is officially decoupled from headcount.

In this ecosystem, the most successful enterprises are those that have replaced task-based labor with outcome-based agent orchestration. AI is no longer a tool to be picked up; it is a coworker with its own "digital passport"—a cryptographic identity required to navigate the high-volume governance and security requirements of the modern stack.

The question for every executive in 2026 is no longer "How do I prompt this?" but "How do I govern a coworker that never sleeps and works at the speed of light?"
